Posts by Flyboy

-

If you are running Windows, you can try HeidiSQL (https://www.heidisql.com/) to see if you can connect to your MySQL database with the username and password you are putting in the config.

Just to see if the basics are correct.

If that doesn't work, start by figuring that out and how to set up the right credentials.
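If HeidiSQL can't connect at all, it can help to first rule out that the MySQL port is simply unreachable. Below is a minimal Python sketch for that. The host and the default port 3306 are assumptions, so use whatever is in your config. It only checks that something accepts TCP connections on that port; it does not test the username or password.

```python
import socket


def can_reach(host: str, port: int = 3306, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the host and opens a TCP connection.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False
```

Calling `can_reach("127.0.0.1")` on the server itself should return True when MySQL is listening on the default port. If it returns False, the problem is with the service or a firewall rather than with the credentials.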

-

Try clicking "Make mysql database"

The database needs to be created before you can connect to it.

-

Did a bunch more testing to get to the bottom of this, because in our logs we are missing weeks of data at a time.

The problem seems to happen when the interface is open and you log out of Windows.

Windows is then responsible for closing the window and the underlying process, and that is when it does not revert to logging directly to the logfile, so no more logs get generated.

This lasts until the next time someone opens the interface (or, I guess, a reboot, but I'm not able to test that at the moment).

So opening the interface and manually closing it, then logging out of Windows works.

But opening the interface and then logging out of Windows breaks the logging.

Is it possible for Conquest to always write to the logfile and have the interface just show it in the log window, like tail -f (on Linux) or what BareTail does on Windows?

Or is there a different way to detect the logoff process?
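To illustrate what I mean by the tail -f approach, here is a rough Python sketch of a viewer that only reads the logfile and never takes over the writing. This is not how Conquest works internally; the function name and the polling interval are just assumptions for the example.

```python
import os
import time
from typing import Iterator


def follow(path: str, poll_seconds: float = 0.5, from_start: bool = False) -> Iterator[str]:
    """Yield complete lines as they are appended to `path`, similar to `tail -f`."""
    with open(path, "r", encoding="utf-8", errors="replace") as f:
        if not from_start:
            # Skip what is already in the file and only show new entries.
            f.seek(0, os.SEEK_END)
        buffer = ""
        while True:
            chunk = f.readline()
            if not chunk:
                # Nothing new yet: wait and poll again.
                time.sleep(poll_seconds)
                continue
            buffer += chunk
            if buffer.endswith("\n"):
                # Only hand out a line once the writer has finished it.
                yield buffer.rstrip("\r\n")
                buffer = ""
```

A viewer would just loop over `follow("serverstatus.log")` and append each line to its log window. Since the server process keeps writing to the file the whole time, closing the viewer (or Windows killing it at logoff) would not affect logging.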

-

Below is an example of things we see.

I think it tends to happen if we close the interface but don't log out of Windows.

Although not sure exactly, will keep an eye on it. Sometimes it doesn't log for a week on end, then it will work as normal until the next login, then it will fail again.

Really strange.

-

We're running 1.5.0b and I'm having an issue where Conquest only writes to the logfile when the interface is open.

When we close the interface and log out of the server (Conquest is running as a service), no data gets added to the serverstatus.log file any more.

The pacstrouble.log and pacsuser.log files are working correctly.

None of the cleanup jobs run either, so the logs are not getting zipped up and archived.

This is on 4 servers, all 2012R2, MySQL and Conquest 1.5.0b.
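Until this is sorted out, a small check like the Python sketch below could at least warn when serverstatus.log has gone quiet. The one-hour threshold and the function names are assumptions for illustration. Also note that on Windows the modification time may lag while a process still holds the file open, so treat it as a rough signal only.

```python
import os
import time
from typing import Optional


def seconds_since_modified(path: str, now: Optional[float] = None) -> float:
    """Return how many seconds ago `path` was last modified."""
    current = time.time() if now is None else now
    return current - os.path.getmtime(path)


def is_stale(path: str, max_age_seconds: float = 3600.0, now: Optional[float] = None) -> bool:
    """Return True if `path` has not been modified within `max_age_seconds`."""
    return seconds_since_modified(path, now) > max_age_seconds
```

Running `is_stale("serverstatus.log")` from a scheduled task on each of the servers would flag a silent logfile after an hour instead of after weeks.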

-

I tested the executable and this solves my 2 issues.

My previous images are no longer blue and my new ones are getting compressed again (where 1.5.0a did not compress them)

When you say this needs more thought, does that mean we should not be using this executable in production or is it just that you want to rethink something for the future, but we can use this one for now?

I'd rather not have to go down to 1.4.19c because we'll lose the performance gains from the newer versions.

thanks again, you are a lifesaver!

-

Guess messaging is no longer an option.

I emailed them over.

-

This is running on Windows 2012R2

Old version was 1.4.19b (all files were upgraded to 1.4.19d, but then the dgate64.exe and related files rolled back to the b version)

I will send the image to you in DM

If I copy the dcm file out and open it in an external viewer without going through Conquest, it looks fine.

-

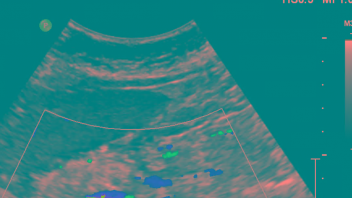

We just upgraded to 1.5a and got reports from our techs that old color images are showing up wrong now.

They all seem to have a blueish overlay on them. This also looks like this in the imageviewer on Conquest itself.

Strange thing is, this is only happening to old images, new images that are being saved to the server now are showing up correctly when they are retrieved.

example:

It doesn't matter whether we open these on the server, KPACS, IQview or an external reading station; they all show up the same.

On the server everything is stored as j2, and when sending to the clients I have tried both j2 and un, both with the weird colors as a result.

Anyone have any ideas on this one?

-

In the exportconverter or importconverter you should be able to do: sleep(20000) forward to aetitle

I believe this should wait 20 seconds before sending the images.

Or you can do: forward study to aetitle

That way Conquest will wait for the whole study to arrive before forwarding it, preventing simultaneous receiving and sending operations.

-

We found it best for the forwarding situations to still have a small local database.

Especially if your target is offline or there are connection issues, so the images can queue up.

So we have set up a small volume for our Conquest "router" of 10 or 25 GB, depending on how busy the location is.

It is working as a normal server with an exportconverter, since that allows for the queueing if needed and then we have Conquest set up with disk space limits, so it will dump the older images as the new ones come in.

-

We can send the same images between multiple Conquest servers, to KPACS and IQview without any problems.

It is only causing issues when sending to this one server.

I even tried doubling the server memory to 32GB, but it doesn't make a difference. It is not coming anywhere close to using all the memory.

This is the debug output:

6/25/2019 4:04:06 PM [CONQUEST_PACS] Testing transfer: '1.2.840.10008.1.2.1' against list #0 = '1.2.840.10008.1.2'
6/25/2019 4:04:06 PM [CONQUEST_PACS] Testing transfer: '1.2.840.10008.1.2.1' against list #1 = '1.2.840.10008.1.2.1'
6/25/2019 4:04:07 PM [CONQUEST_PACS] Application Context : "1.2.840.10008.3.1.1.1", PDU length: 64234
6/25/2019 4:04:07 PM [CONQUEST_PACS] 0000,0002 28 UI AffectedSOPClassUID "1.2.840.10008.5.1.4.1.2.2.2"
6/25/2019 4:04:07 PM [CONQUEST_PACS] Issue Query on Columns: DICOMImages.SOPClassUI, DICOMImages.SOPInstanc, DICOMSeries.SeriesInst, DICOMStudies.StudyInsta,DICOMImages.ObjectFile,DICOMImages.DeviceName
6/25/2019 4:04:07 PM [CONQUEST_PACS] Values: DICOMStudies.StudyInsta = 'xxxxx' and DICOMSeries.StudyInsta = DICOMStudies.StudyInsta and DICOMImages.SeriesInst = DICOMSeries.SeriesInst
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113681.167838594.1559718708.2040.1474_71300000_000152_1559779796269c.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113681.167838594.1559718708.2040.1480.1_73200000_000154_155977983626bc.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113681.167838594.1559718708.2040.1486_71300000_000146_1559779756266d.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113681.167838594.1559718708.2040.1492.1_73200000_000148_15597797942695.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113681.167838594.1559718708.2040.1498_71300000_000140_15597797212615.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113681.167838594.1559718708.2040.1504.1_73200000_000142_15597797542665.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.2.840.113986.2.2322045632.20190605.102456.130.2512_0002_000001_155977983926bf.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Locating file:MAG0 xxxxx\1.3.6.1.4.1.34261.57277341658132.6516.1559741858.0_0001_000001_15597799502751.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Sending file : e:\CONQUEST_PACS\xxxxx\xxxxx_000158_155977983926be.dcm
6/25/2019 4:04:07 PM [CONQUEST_PACS] Debug[DecompressJPEGL]: H = 4096, W = 3328, Bits = 12 in 16, Components = 1, Frames = 1
6/25/2019 4:04:08 PM [CONQUEST_PACS] Sending file : e:\CONQUEST_PACS\xxxxx\xxxxx_000160_15597798712722.dcm
6/25/2019 4:04:08 PM [CONQUEST_PACS] Debug[DecompressJPEGL]: H = 2457, W = 1996, Bits = 10 in 16, Components = 1, Frames = 84

Looks from here the decompress might be taking longer than the timeout on the receiving end.

While the job is running one of the CPU cores seems to be maxed out and some memory being used (some being 1-3GB) -> see screenshot attached

So the first spike in traffic seems to be the first image, which converts really quickly, followed by nothing while it is waiting on the image conversion, if I'm reading this correctly. And the receiving end times out while waiting.

Guessing there is no flag for multi-core compressing and decompressing?

Also tried disabling read-ahead since I thought it might have been converting multiple things in memory, but that doesn't seem to be the case and did not help.

I'm guessing my options now are to ask the receiving end to increase their time-outs or to invest in a faster CPU.

-

Yes, the studies we are sending are 3D mammograms, so around 800 MB apiece or more.

They are being converted from j2 to ul or un.

When I try to pass them off as j2 to the receiving party it still does a conversion to j1.

So there is always a conversion going on, but I didn't think the decompressing of a single image took 15 seconds, causing the timeout. The server has enough horsepower to do it pretty quickly. Is there any way to check on those timings?
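One rough way to get at those timings from the outside is to take the debug log and compute how long each entry lasted before the next one was written. The Python sketch below assumes the timestamp format seen in the debug output above (for example "6/25/2019 4:04:07 PM [CONQUEST_PACS] ..."); the function name is made up for the example. The log only has one-second resolution, so this will only show steps that take seconds, not milliseconds.

```python
import re
from datetime import datetime
from typing import Iterable, List, Tuple

# Assumed line layout: "<m/d/yyyy h:mm:ss AM|PM> [<server name>] <message>"
LINE_RE = re.compile(
    r"^(\d{1,2}/\d{1,2}/\d{4} \d{1,2}:\d{2}:\d{2} [AP]M) \[[^\]]*\] (.*)$"
)
STAMP_FORMAT = "%m/%d/%Y %I:%M:%S %p"


def step_durations(lines: Iterable[str]) -> List[Tuple[float, str]]:
    """Return (seconds until the next log entry, message) for each entry but the last."""
    entries = []
    for line in lines:
        match = LINE_RE.match(line.strip())
        if match:  # lines that do not start with a timestamp are skipped
            stamp = datetime.strptime(match.group(1), STAMP_FORMAT)
            entries.append((stamp, match.group(2)))
    return [
        ((later[0] - earlier[0]).total_seconds(), earlier[1])
        for earlier, later in zip(entries, entries[1:])
    ]


def slow_steps(lines: Iterable[str], min_seconds: float = 5.0) -> List[Tuple[float, str]]:
    """Keep only the entries that were followed by a gap of at least `min_seconds`."""
    return [item for item in step_durations(lines) if item[0] >= min_seconds]
```

Feeding it the saved debug log, for example `slow_steps(open("debug.log", encoding="utf-8", errors="replace"))`, would list the messages that were followed by a long pause. A "Debug[DecompressJPEGL]" entry followed by a 15 second gap would point at the decompression, while a gap after "Sending file" would point more at the transfer itself. The file name debug.log is just a placeholder.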

-

I have come across this as well.

We are storing everything in j2 compression, which is a lot smaller than uncompressed. But some of our review stations and clients have issues with certain images, so the only good way for us to send them is uncompressed.

In the local office, that is no issue, but for remote sites this was unworkable.

So to fix this, we set up a Conquest server on each of the machines that accepts all images as j2 and forwards them uncompressed to the client.

Works very well for us and sped up transfers a lot.

-

The blue mark on the screenshot is where the remote server sent an abort command due to a time-out.

Before the abort command it looks to me that one of the images finishes transferring (line 14984)

After which the next one starts (line 15985)

This is followed by the transfer and acknowledgement of 1 packet, after which everything stops until the remote server times out and cancels the transfer.

It looks like Conquest just stops sending for some reason, but I can't find anything in its logs, not even with full debug enabled.

-

The d2 release is acting like the b version again.

So our normal images are transferring.

I'm still having an issue transferring large BTO images from Conquest to a new cloud gateway we are dealing with.

It is failing due to a read time-out on the gateway side.

This behaves the same way in 1.4.19b and now in 1.4.19d2.

I attached an image of the traffic captured during the transfer.

All of a sudden, there is a gap there, which causes the receiving end to time-out and cancel the transfer.

In Dicom there is nothing in the logs even with debug set to the max level.

Both machines are on the same virtual hosts, so there is no packet loss or firewall rules in play here.

I even turned off all the offloading options and similar settings on the NIC to ensure they aren't interfering.

Since we do not have any issues with other vendors and other systems, I always thought it was the receiving end. But the Wireshark captures now show no new images being sent from Conquest, so I'm guessing it might be the sending side.

Anything you can see or think of?

-

I wanted to come back to an issue first brought up in the 1.4.19d1 thread.

When we are transferring images from Conquest 1.4.19b, we can send things to a GE Linux PACS server without any problems.

But when going to 1.4.19d1 we see the following appear in the logs and transfers are cancelled:

2019-06-19 15:58:57 EDT ERROR-|DicomServiceTemplate:271| Exception in creating dicom dataSpecified length (27269900) of PDU exceeds limit: 1048576

java.io.IOException: Specified length (27269900) of PDU exceeds limit: 1048576

Reverting back to the old version fixes the problem again.

To try and see what might be going on, I took some captures with Wireshark and I'm noticing a difference in the traffic flow.

While the old version is putting in small PDU segments, the new one seems to throw everything under 1 large transfer as far as I can tell.
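To put the numbers from the error in perspective, here is a small Python sketch of how a sender would normally cut the data into pieces that each fit within the receiver's PDU limit. The values 27269900 and 1048576 come straight from the error message above. The 6 bytes of overhead per PDU (4-byte PDV length, presentation context ID and message control header) is my reading of the DICOM upper layer protocol and should be treated as an assumption. This is not Conquest's actual code.

```python
from typing import List

# Assumption: one PDV per PDU, costing 4 bytes of item length plus
# 1 byte presentation context ID and 1 byte message control header.
PDV_OVERHEAD = 6


def fragment_sizes(data_length: int, max_pdu_length: int) -> List[int]:
    """Split `data_length` bytes into payload sizes that each fit in one PDU."""
    payload = max_pdu_length - PDV_OVERHEAD
    if payload <= 0:
        raise ValueError("max_pdu_length is too small to carry any data")
    full, rest = divmod(data_length, payload)
    # `full` fragments of maximum size, plus one shorter fragment for the remainder.
    return [payload] * full + ([rest] if rest else [])
```

With these assumptions, `fragment_sizes(27269900, 1048576)` gives 27 fragments: 26 full ones and a short last one. That would match what the old version seems to do in the capture (many small PDU segments), whereas a single 27269900-byte PDU is exactly what the receiving side rejects.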

Screenshots attached so someone more knowledgeable can have a look and hopefully let me know what is going on here.

Let me know if more info is needed.

Thanks in advance

-

Is there anything I can change on Windows to test things?